Why most teams invert the pyramid, when that's actually fine, and how to build a test distribution that fits your real architecture instead of a textbook diagram.

The Sacred Geometry of Software Testing

Somewhere around 2009, Mike Cohn drew a triangle in a book and accidentally created a religion.

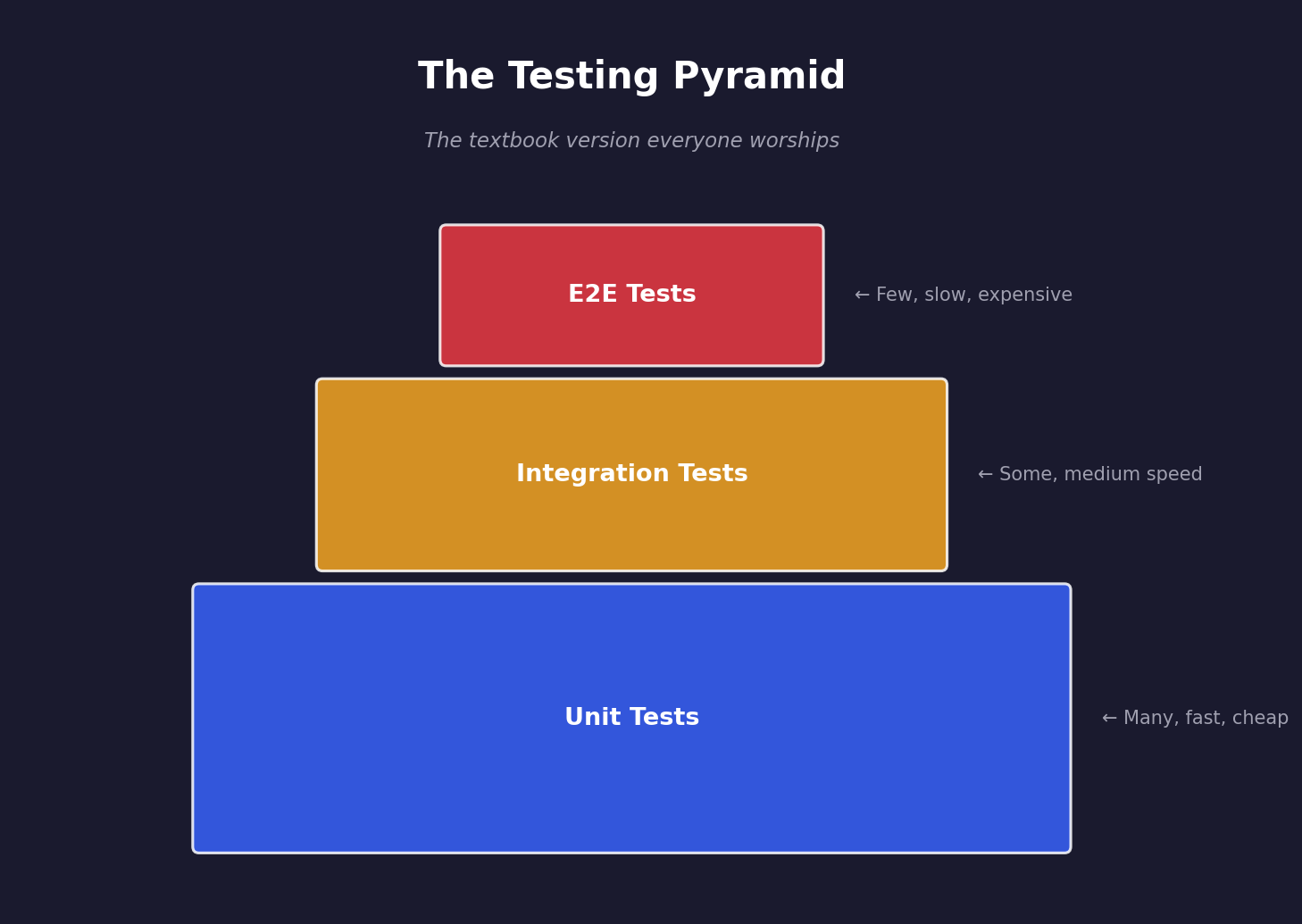

The Testing Pyramid. You've seen it. You've had it tattooed onto a conference slide. You've had it projected onto a wall during a meeting where someone used the phrase "shift left" without irony, while a Scrum Master nodded solemnly like they'd just witnessed a prophecy unfold. It looks like this:

The idea is elegant: lots of fast unit tests at the bottom, fewer integration tests in the middle, and a handful of end-to-end tests at the top. Fast feedback, low cost, maximum confidence.

There's just one problem.

Almost nobody's test suite actually looks like this. And for a surprising number of teams, that's completely fine.

🙃 The Inverted Reality

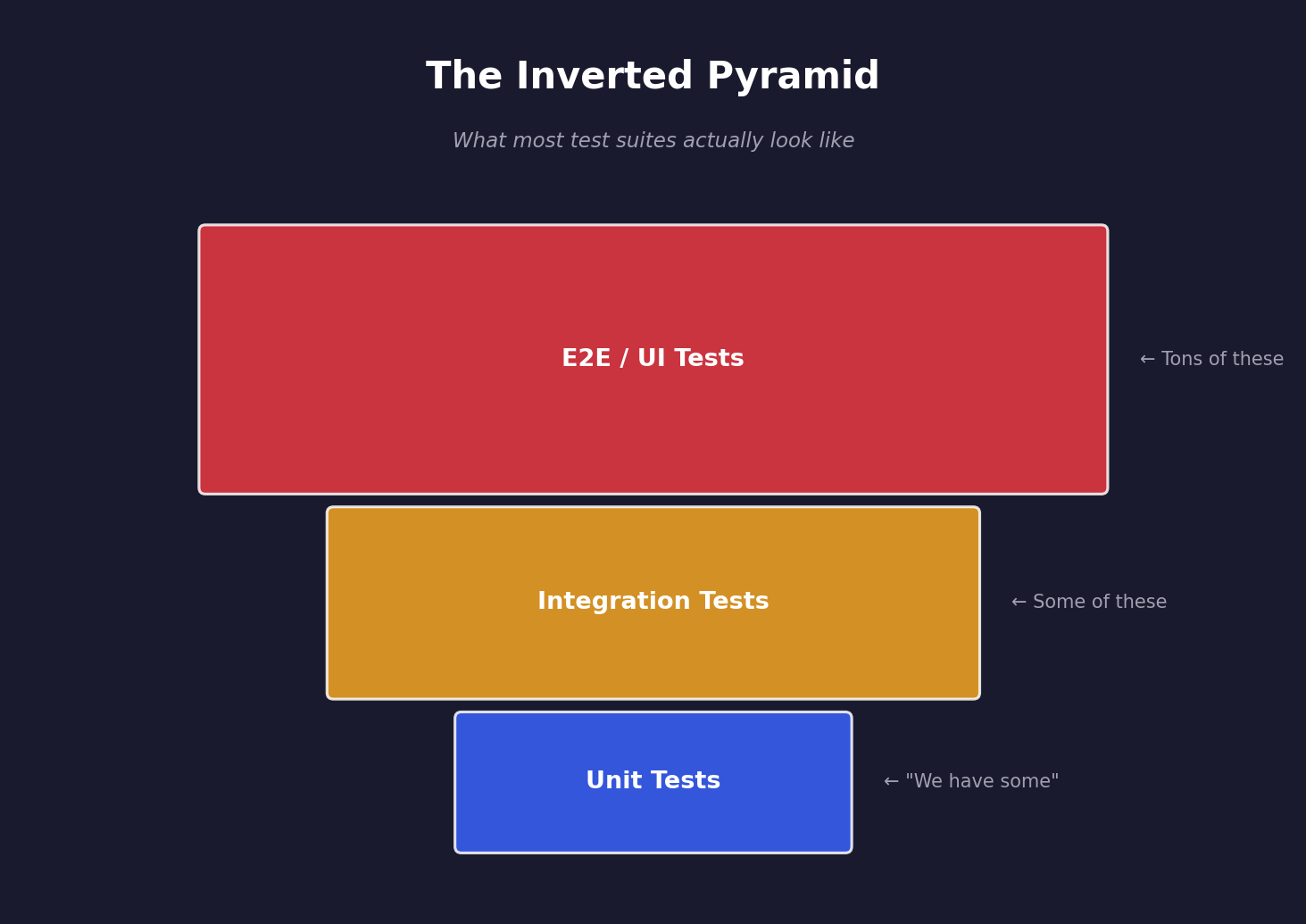

Here's what most test suites actually look like in the wild:

The Ice Cream Cone. The Inverted Pyramid. The Shape of Regret. The "oh god, who built this" formation.

If you've ever inherited a codebase where the CI pipeline takes 45 minutes because there are 800 Selenium tests and 12 unit tests, congratulations — you've met the ice cream cone in person. It probably didn't buy you dinner first. It just showed up, moved in, and started eating your build minutes.

But here's where it gets interesting: the reason teams end up here isn't always laziness or ignorance. Sometimes, the architecture genuinely makes unit testing harder than integration testing. Sometimes, the business risk lives at the UI layer and nowhere else. Sometimes, the "right" test distribution depends on what you're actually building, not what a triangle from 2009 says you should build.

Let's talk about when the pyramid is right, when it's wrong, and what to do instead of blindly following geometric shapes.

📐 Why the Pyramid Exists (And What It Gets Right)

Before we tear it apart, let's acknowledge what the pyramid gets genuinely right.

The original insight was about feedback speed and cost. Here's the math that makes the pyramid compelling:

| Property | Unit Tests | Integration Tests | E2E Tests |
|---|---|---|---|
| ⏱️ Execution time | 1-50ms each | 100ms-5s each | 5-60s each |
| 💰 Maintenance cost | Low | Medium | High |
| 🎯 Failure precision | Exact line of code | General area | "Something broke somewhere" |
| 🔄 Feedback loop | Seconds | Minutes | Minutes to hours |
| 🤝 External dependencies | None | Some (DB, cache) | Everything (browser, network, services) |
| 😤 Flakiness risk | Almost zero | Low-medium | "Is it Tuesday?" |
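
To put rough numbers on those execution times, here is a back-of-the-envelope sketch. The 3 ms and 30 s figures are sample values picked from inside the table's ranges; the suite size of 800 tests and the fully serial run are assumptions for illustration, and `SuiteCost` is a made-up name.

```java
import java.time.Duration;

/** Back-of-the-envelope runtime for a suite that runs serially (an assumption). */
class SuiteCost {
    static Duration serialRuntime(int testCount, Duration perTest) {
        return perTest.multipliedBy(testCount);
    }

    public static void main(String[] args) {
        int tests = 800; // hypothetical suite size
        Duration unit = serialRuntime(tests, Duration.ofMillis(3));
        Duration e2e = serialRuntime(tests, Duration.ofSeconds(30));
        System.out.println("800 unit tests: " + unit.toMillis() + " ms"); // 2400 ms
        System.out.println("800 E2E tests:  " + e2e.toMinutes() + " min"); // 400 min
    }
}
```

Parallel runners shrink the second number a lot, but the gap of several orders of magnitude stays.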

The pyramid says: maximize the cheap, fast, precise tests. Minimize the expensive, slow, vague ones.

That's genuinely good advice. If you can catch a bug with a unit test that runs in 3 milliseconds, why would you catch it with a Selenium test that takes 30 seconds and breaks every time Chrome updates?

The answer is: you wouldn't. Unless you can't write that unit test. And that's where things get complicated.

💀 The Three Lies Hidden in the Pyramid

Lie #1: "Unit Tests Catch the Bugs That Matter"

Unit tests are fantastic at verifying that individual functions do what they're supposed to do. They are terrible at verifying that your system works.

Here's an analogy. Imagine you're building a car:

- ✅ Unit test: "The engine produces 200 horsepower" — passes

- ✅ Unit test: "The transmission has 6 gears" — passes

- ✅ Unit test: "The brakes apply 500 Newtons of force" — passes

- ❌ Integration test: "The engine is connected to the transmission" — fails

- 💀 Production: Car doesn't move

Every component works perfectly in isolation. The car doesn't drive. This happens in software constantly.

Your OrderService.calculateTotal() is perfect. Your PaymentGateway.charge() is perfect. But the order service sends the total in cents while the payment gateway expects dollars. A unit test will never catch this. An integration test catches it in seconds.
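
Here is a minimal sketch of that cents-versus-dollars mismatch. The classes `OrderTotals`, `FakePaymentGateway`, and `UnitMismatchDemo` are hypothetical stand-ins written for this example, not the real services.

```java
import java.math.BigDecimal;

/** Hypothetical stand-in for the order side: totals are in cents. */
class OrderTotals {
    static long calculateTotalCents(long unitPriceCents, int quantity) {
        return unitPriceCents * quantity;
    }
}

/** Hypothetical stand-in for the gateway: it expects dollars. */
class FakePaymentGateway {
    /** Converts a dollar amount to the number of cents actually charged. */
    static long chargeDollars(BigDecimal amountInDollars) {
        return amountInDollars.movePointRight(2).longValueExact();
    }
}

class UnitMismatchDemo {
    /** Wires the two together the way a careless caller might. */
    static long chargedCentsFor(long unitPriceCents, int quantity) {
        long totalCents = OrderTotals.calculateTotalCents(unitPriceCents, quantity);
        // Bug: a value in cents is passed where dollars are expected.
        return FakePaymentGateway.chargeDollars(BigDecimal.valueOf(totalCents));
    }

    public static void main(String[] args) {
        // Each piece is correct in isolation...
        System.out.println(OrderTotals.calculateTotalCents(999, 2));                   // 1998
        System.out.println(FakePaymentGateway.chargeDollars(new BigDecimal("19.98"))); // 1998
        // ...but the combination charges 100x too much.
        System.out.println(chargedCentsFor(999, 2));                                   // 199800
    }
}
```

Only the last call exercises the seam between the two components, which is exactly the part a mocked unit test skips.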

The uncomfortable truth: In microservice architectures, many production bugs live in the spaces between services, not inside them. The pyramid was popularized when most applications were monoliths and most of the logic lived inside a single process.

Lie #2: "You Can Always Write Unit Tests"

The pyramid assumes your code is structured in a way that makes unit testing easy. This assumption is so heroically optimistic it belongs in a motivational poster hanging in a WeWork bathroom.

Real-world code that resists unit testing:

- Legacy systems where business logic is entangled with database calls, HTTP clients, and file I/O in the same method — you know, the method that's 400 lines long and has a comment at the top saying "TODO: refactor" dated 2017

- Orchestration layers that primarily coordinate other services — the logic IS the integration, and unit testing it means mocking the entire universe

- CRUD applications where the interesting behavior is "data flows correctly from API to database to response" — congratulations, your business logic is a glorified pipe

- UI-heavy applications where the business logic lives in the rendering layer, because someone thought putting calculations inside a React component was "pragmatic"

- Data pipelines where the value is in the transformation across stages, not individual steps

You can refactor all of these to be more testable. But refactoring takes time, introduces risk, and costs money. Sometimes "write an integration test that covers the actual behavior" is the pragmatic choice over "spend two sprints refactoring so we can write pure unit tests."

Lie #3: "More Unit Tests = More Confidence"

This is the sneakiest lie. You can have 95% code coverage from unit tests and still have zero confidence that your application works.

Here's why:

    // Service with 100% unit test coverage
    public class OrderService {

        public BigDecimal calculateTotal(List<Item> items) {
            return items.stream()
                .map(item -> item.getPrice().multiply(BigDecimal.valueOf(item.getQuantity())))
                .reduce(BigDecimal.ZERO, BigDecimal::add);
        }

        public void submitOrder(Order order) {
            BigDecimal total = calculateTotal(order.getItems());
            paymentClient.charge(order.getCustomerId(), total);  // Mocked in tests
            inventoryClient.reserve(order.getItems());           // Mocked in tests
            notificationClient.sendConfirmation(order);          // Mocked in tests
            orderRepository.save(order);                         // Mocked in tests
        }
    }

Your unit tests mock every dependency and verify that submitOrder calls them in order. 100% line coverage. Beautiful.

But your tests don't know that:

- 🔥 paymentClient expects amounts in cents, not dollars

- 🔥 inventoryClient throws a RetryableException that your code doesn't handle

- 🔥 notificationClient has a 5-second timeout that causes the whole transaction to hang

- 🔥 orderRepository.save() fails silently when the order total exceeds a database column's precision

All of those are integration-level bugs. Your 100% coverage unit test suite catches exactly zero of them. Your team deploys with confidence. Production catches fire. The CTO asks "but didn't we have tests?" and everyone stares at the floor.

Coverage measures how much code your tests execute. It says nothing about how much behavior your tests verify. It's the testing equivalent of measuring a restaurant's quality by how many plates they own.

🏗️ When the Pyramid Actually Works

The pyramid is a great fit when:

1. You're building a monolith with rich domain logic

If your application has complex business rules — financial calculations, eligibility engines, scheduling algorithms, state machines — then unit tests are your best friend. The logic is self-contained, has clear inputs and outputs, and benefits from hundreds of edge case tests.

Think: tax calculation engines, insurance underwriting logic, game physics.

2. Your codebase is well-structured for testability

If you've got clean separation of concerns, dependency injection, and thin integration layers, then unit testing is genuinely easy and valuable. The pyramid was designed for this world.

3. Your team is disciplined about test maintenance

Unit tests are low-maintenance individually, but 3,000 of them can become a maintenance nightmare if they're tightly coupled to implementation details.

🔥 When to Burn the Pyramid Down

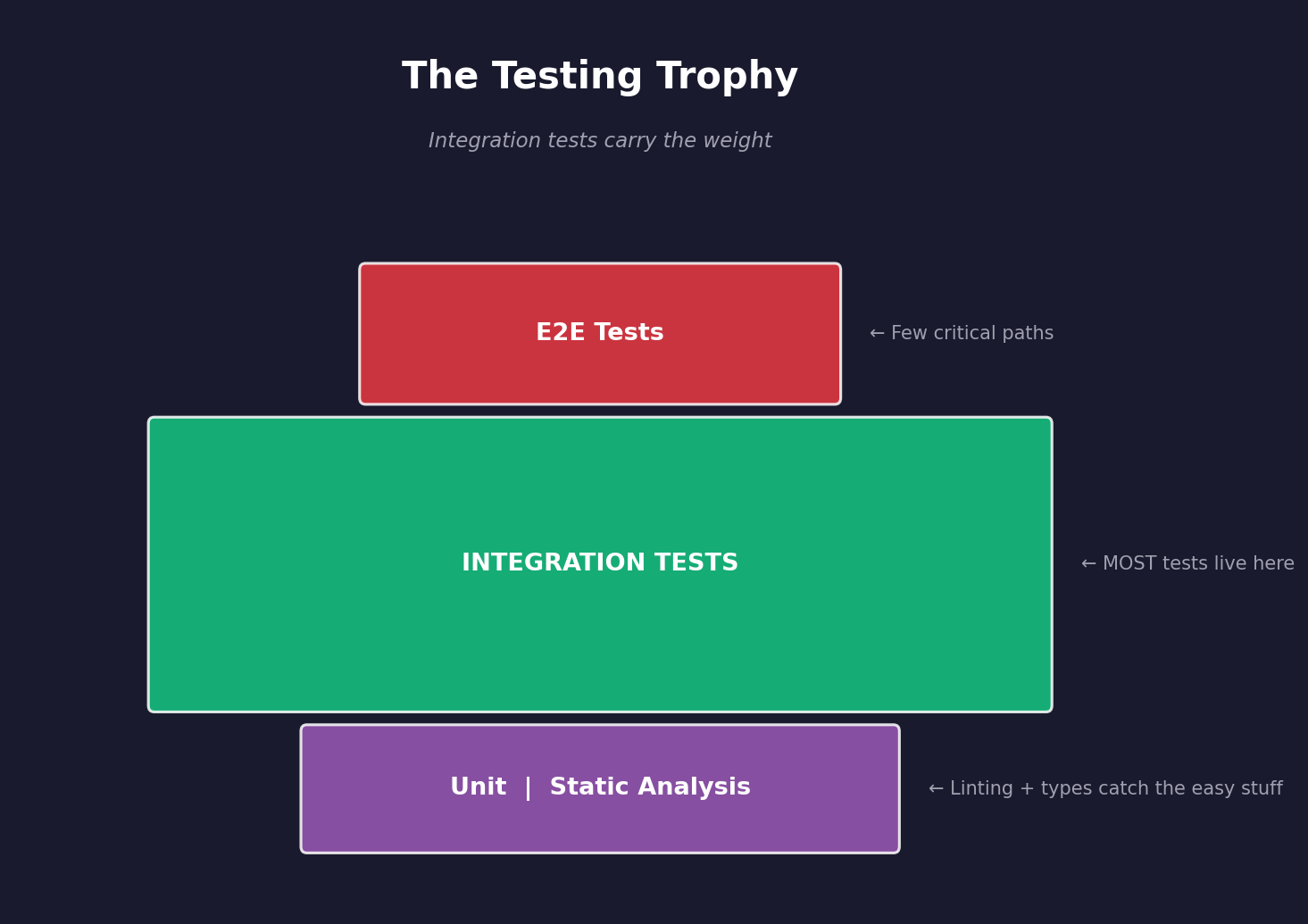

The Trophy 🏆

Kent C. Dodds proposed the "Testing Trophy" as an alternative that better fits modern web applications:

The key insight: integration tests give you the best ratio of confidence to cost for many modern architectures. They test real behavior with real dependencies (or close to it) without the brittleness of full E2E tests.

This works especially well for:

- Web APIs where the value is "request goes in, correct response comes out"

- Microservices where the interesting bugs are in the communication

- Applications with thin business logic but complex integration patterns

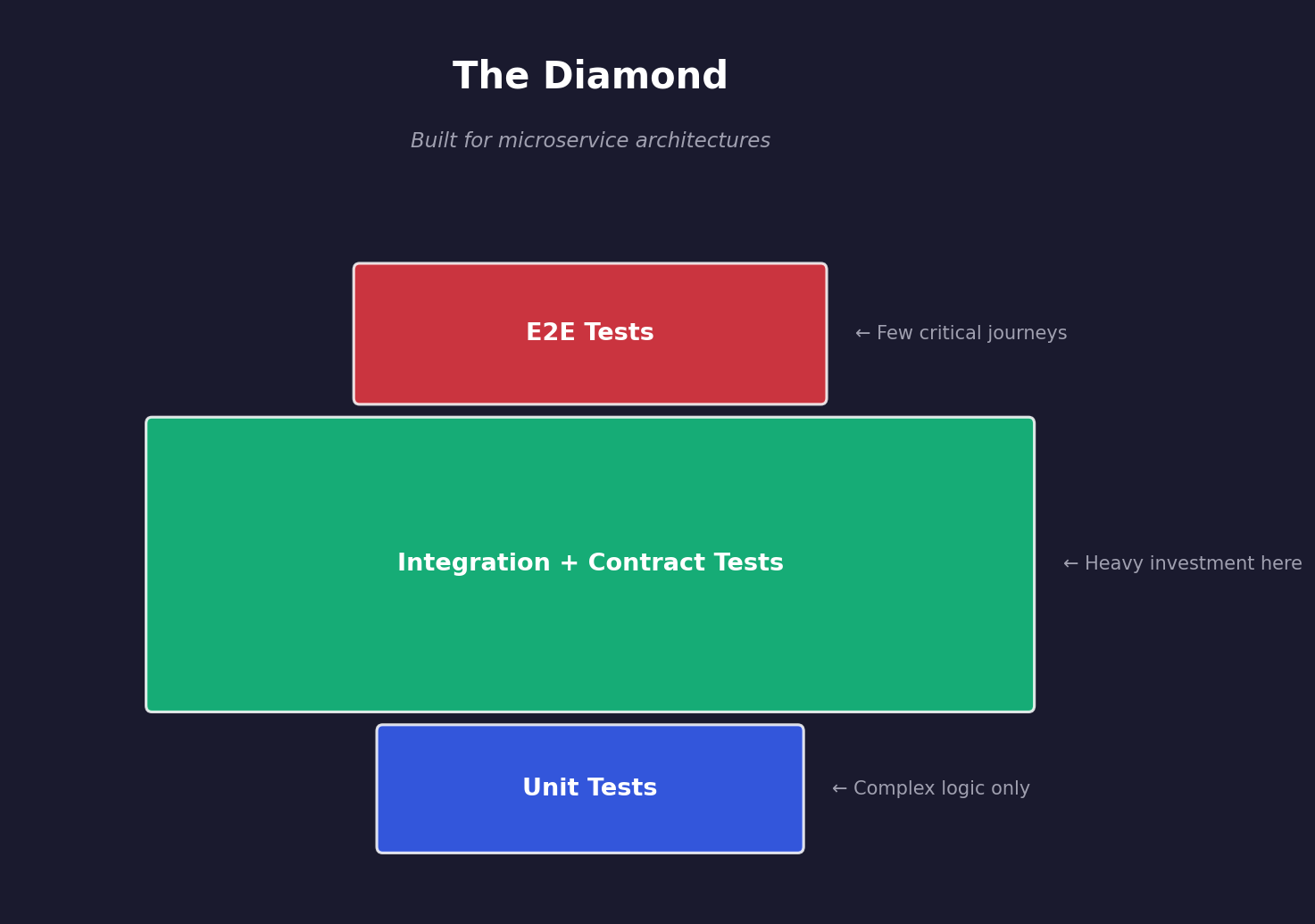

The Diamond 💎

For microservice architectures, some teams find the diamond shape works best:

Heavy investment in integration and contract testing, with unit tests only where the logic is genuinely complex. E2E tests cover only the critical happy paths.
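
To show what a contract check boils down to, here is a deliberately tiny sketch: the consumer lists the fields it relies on, and the check reports any that the provider's response lacks. The field names and the `ContractCheck` class are invented for this example; dedicated tools such as Pact go much further (types, status codes, provider verification).

```java
import java.util.Map;
import java.util.Set;
import java.util.TreeSet;

class ContractCheck {
    /** Returns the fields the consumer needs that the provider response lacks. */
    static Set<String> missingFields(Set<String> requiredByConsumer, Map<String, ?> providerResponse) {
        Set<String> missing = new TreeSet<>(requiredByConsumer);
        missing.removeAll(providerResponse.keySet());
        return missing;
    }

    public static void main(String[] args) {
        // Hypothetical contract: the consumer reads these three fields.
        Set<String> required = Set.of("orderId", "status", "totalCents");
        // Hypothetical provider response after someone renamed totalCents to total.
        Map<String, Object> response =
            Map.of("orderId", "o-1", "status", "CONFIRMED", "total", "19.98");

        System.out.println(missingFields(required, response)); // [totalCents]
    }
}
```

Both services could pass all their own unit tests while this check fails, which is the whole argument for the diamond.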

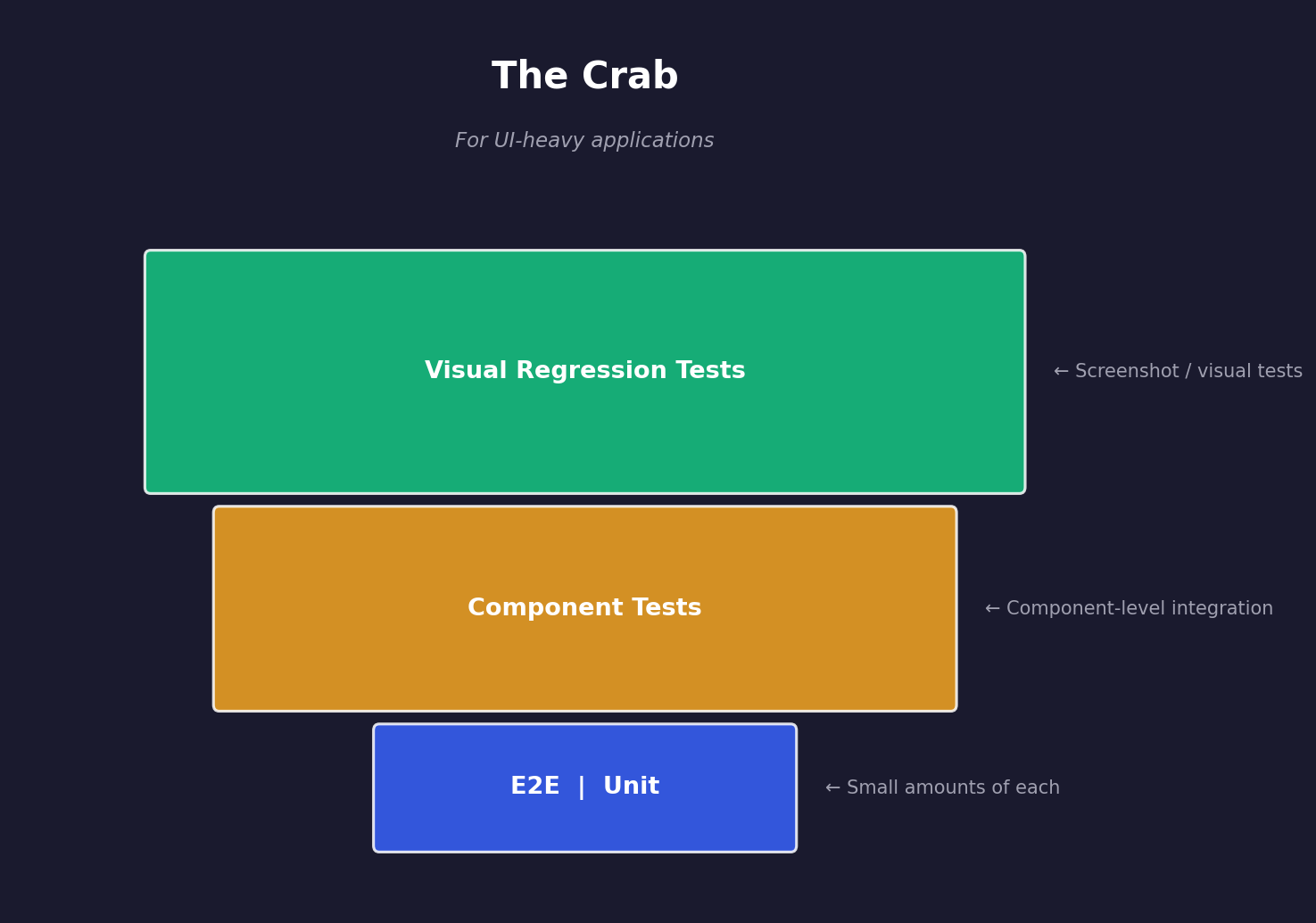

The Crab 🦀 (Yes, Really)

For UI-heavy applications (think: rich single-page apps, design systems, component libraries):

Visual regression testing and component-level tests carry most of the weight because the product IS the UI. A unit test on a React component that renders a button doesn't tell you if the button looks right or is in the right place.

🧮 Building Your Own Test Distribution

Enough theory. Here's a practical framework for deciding what your test distribution should look like.

Step 1: Map Where Your Bugs Actually Live

Before you write a single test, look at your last 6 months of production incidents. Categorize them:

| Bug Category | Example | Best Caught By |
|---|---|---|
| Logic errors | Wrong calculation, bad conditional | Unit test |
| Integration failures | Wrong API contract, serialization mismatch | Integration / Contract test |
| Data issues | Null values, encoding, timezone bugs | Integration test with real data |
| UI/UX bugs | Wrong layout, broken flow, missing element | E2E / Visual regression |
| Infrastructure | Timeout, connection pool, memory leak | E2E / Performance test |
| Configuration | Wrong env var, feature flag, deployment config | Smoke test / E2E |

If 80% of your production bugs are integration failures, the bulk of your testing effort should go into integration tests. The pyramid be damned.

This is the single most important step. Test where the risk actually is, not where a diagram says it should be.
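
The tallying itself is simple enough to script. Here is a minimal sketch that turns a list of categorized incidents into percentage shares. The ten sample incidents and the `IncidentTally` class are made up for illustration; in practice the categories would come from your own incident tracker.

```java
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

class IncidentTally {
    /** Percentage share of each category, rounded to the nearest whole percent. */
    static Map<String, Long> shareByCategory(List<String> incidentCategories) {
        Map<String, Long> counts = new LinkedHashMap<>();
        for (String category : incidentCategories) {
            counts.merge(category, 1L, Long::sum);
        }
        Map<String, Long> shares = new LinkedHashMap<>();
        for (Map.Entry<String, Long> entry : counts.entrySet()) {
            long percent = Math.round(100.0 * entry.getValue() / incidentCategories.size());
            shares.put(entry.getKey(), percent);
        }
        return shares;
    }

    public static void main(String[] args) {
        // Hypothetical incident log: one category label per incident.
        List<String> incidents = List.of(
            "integration", "integration", "logic", "integration", "config",
            "integration", "data", "integration", "logic", "integration");

        System.out.println(shareByCategory(incidents));
        // {integration=60, logic=20, config=10, data=10}
    }
}
```

Because each share is rounded separately, the percentages will not always add up to exactly 100. For a rough map of where the risk sits, that is good enough.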

Step 2: Assess Your Architecture's Testability

Be honest about what's easy to test and what's not:

| Your Architecture | Natural Test Shape |
|---|---|
| Monolith + rich domain logic | Classic pyramid 🔺 |
| Microservices + thin APIs | Diamond / trophy 💎🏆 |
| CRUD app + complex UI | Crab 🦀 |
| Legacy spaghetti code (no judgment, we've all been there) | Whatever you can get 🍝 |
| Data pipeline | Integration-heavy ◆ |
| Event-driven / async | Contract + integration 💎 |

Don't fight your architecture. If writing unit tests for your orchestration service requires mocking 12 dependencies and the test tells you nothing useful, stop doing it. Write an integration test that spins up the service with a test database and actually verifies the behavior.

Step 3: Define Your Confidence Equation

Not all tests contribute equally to your confidence. Define what "confident enough to deploy" means for your team:

    Deployment Confidence =
        (Critical path E2E ✅) +
        (Integration coverage of main flows ✅) +
        (Unit tests on complex logic ✅) +
        (Contract tests between services ✅) +
        (Static analysis green ✅)

Some teams can deploy confidently with 20 E2E tests, 200 integration tests, and 50 unit tests. Others need 2,000 unit tests, 100 integration tests, and 10 E2E tests. Neither is wrong: they're building different things with different risk profiles.
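
One way to make the equation concrete is to treat it as an all-or-nothing gate: every term must be green before a deploy goes out. The sketch below assumes exactly that reading, and `DeploymentGate` and its check names are illustrative rather than part of any real CI tool.

```java
import java.util.ArrayList;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

class DeploymentGate {
    /** Names of the checks that are not green; an empty list means "confident enough". */
    static List<String> failingChecks(Map<String, Boolean> checks) {
        List<String> failing = new ArrayList<>();
        for (Map.Entry<String, Boolean> check : checks.entrySet()) {
            if (!check.getValue()) {
                failing.add(check.getKey());
            }
        }
        return failing;
    }

    static boolean canDeploy(Map<String, Boolean> checks) {
        return failingChecks(checks).isEmpty();
    }

    public static void main(String[] args) {
        Map<String, Boolean> checks = new LinkedHashMap<>();
        checks.put("critical path E2E", true);
        checks.put("integration coverage of main flows", true);
        checks.put("unit tests on complex logic", true);
        checks.put("contract tests between services", false); // hypothetical red check
        checks.put("static analysis", true);

        System.out.println(failingChecks(checks)); // [contract tests between services]
        System.out.println(canDeploy(checks));     // false
    }
}
```

Which terms belong in the map, and how many tests sit behind each one, is the part each team has to decide for itself.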

Step 4: Optimize for Feedback Speed

Whatever shape you choose, organize your tests into feedback tiers:

| Tier | Tests | When They Run | Target Time |
|---|---|---|---|
| 🟢 Tier 1 | Unit + static analysis | Every commit, pre-push | < 2 minutes |
| 🟡 Tier 2 | Integration + contract | Every PR, CI pipeline | < 10 minutes |
| 🔴 Tier 3 | E2E + visual + performance | Pre-merge, nightly | < 30 minutes |

The goal: fast feedback on common problems, thorough feedback before deployment. A developer should never wait 30 minutes to find out they broke a unit test.
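
Time budgets only help if something checks them. Here is a small sketch that encodes the three targets from the table and flags a tier that has drifted past its budget. The `FeedbackTiers` class and the measured durations are hypothetical.

```java
import java.time.Duration;

class FeedbackTiers {
    enum Tier {
        TIER_1(Duration.ofMinutes(2)),   // unit + static analysis
        TIER_2(Duration.ofMinutes(10)),  // integration + contract
        TIER_3(Duration.ofMinutes(30));  // E2E + visual + performance

        final Duration budget;

        Tier(Duration budget) {
            this.budget = budget;
        }

        /** True when the measured run time is strictly under the tier's target. */
        boolean withinBudget(Duration measured) {
            return measured.compareTo(budget) < 0;
        }
    }

    public static void main(String[] args) {
        // Hypothetical measurements from a CI run.
        System.out.println(Tier.TIER_1.withinBudget(Duration.ofSeconds(95))); // true
        System.out.println(Tier.TIER_2.withinBudget(Duration.ofMinutes(14))); // false
    }
}
```

In JUnit 5, the tiers themselves are often assigned with `@Tag` and selected by the build tool, so a check like this can run against each tagged group separately.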

🔬 Practical Example: A Real Test Distribution

Let's make this concrete. Imagine you're building an e-commerce API — a Spring Boot service with a PostgreSQL database, a payment gateway integration, and a notification service.

Where the bugs live (from past incidents):

- 40% — Payment integration (wrong amounts, timeout handling, retry logic)

- 25% — Data integrity (null values, race conditions in inventory)

- 20% — Business logic (pricing rules, discount calculations, tax)

- 10% — API contract (wrong response format, missing fields)

- 5% — Configuration (wrong gateway URL, expired credentials)

The resulting test distribution:

    // 📁 Test counts:
    // Unit tests:        ~60  (pricing, discounts, tax calculations)
    // Integration tests: ~120 (DB operations, payment flows, full request/response)
    // Contract tests:    ~30  (API schemas, inter-service contracts)
    // E2E tests:         ~15  (critical purchase flows, checkout happy paths)
    // Smoke tests:       ~5   (health checks, config validation)
    // Total:             ~230 tests

That's roughly 26% unit, 52% integration, 13% contract, 7% E2E, 2% smoke.

Not a pyramid. Not a trophy. Just a shape that matches where the risk actually lives.

Unit tests — only for the genuinely complex logic:

    @Test
    void calculateTotal_appliesQuantityDiscount_above10Items() {
        // This has real logic worth testing in isolation
        List<OrderItem> items = List.of(
            new OrderItem("WIDGET", new BigDecimal("9.99"), 15)
        );

        BigDecimal total = pricingService.calculateTotal(items);

        // 15 items × $9.99 = $149.85; a 10% quantity discount gives $134.865,
        // which rounds half-up to $134.87
        assertThat(total).isEqualByComparingTo("134.87");
    }

    @Test
    void calculateTax_handlesMultipleJurisdictions() {
        // Tax logic is genuinely complex, so unit tests earn their keep here
        Order order = OrderFixture.withShippingTo("TX");
        order.addItem("DIGITAL_GOOD", new BigDecimal("100.00"));
        order.addItem("PHYSICAL_GOOD", new BigDecimal("50.00"));

        TaxBreakdown tax = taxService.calculate(order);

        // Simplified rule assumed for this example: digital goods untaxed,
        // 6.25% on physical goods (6.25% of $50.00 = $3.125, rounded half-up to $3.13)
        assertThat(tax.getDigitalTax()).isEqualByComparingTo("0.00");
        assertThat(tax.getPhysicalTax()).isEqualByComparingTo("3.13");
    }

Integration tests, the heavy lifters:

    @SpringBootTest
    @Testcontainers
    class OrderIntegrationTest {

        @Container
        static PostgreSQLContainer<?> postgres = new PostgreSQLContainer<>("postgres:15");

        @Container
        static WireMockContainer paymentGateway = new WireMockContainer();

        @Test
        void submitOrder_chargesPaymentAndPersistsOrder() {
            // This tests the REAL behavior: HTTP → Service → DB → External API
            paymentGateway.stubFor(post("/charge")
                .willReturn(ok().withBody("{\"transactionId\": \"tx-123\"}")));

            var response = restClient.post("/api/orders")
                .body(new CreateOrderRequest("customer-1", List.of(
                    new OrderItemRequest("WIDGET", 2, "9.99")
                )))
                .exchange();

            assertThat(response.getStatusCode()).isEqualTo(HttpStatus.CREATED);

            // Verify the payment was charged with the CORRECT amount
            paymentGateway.verify(postRequestedFor(urlEqualTo("/charge"))
                .withRequestBody(matchingJsonPath("$.amount", equalTo("1998"))));
            // ^^^ cents, not dollars: this is where the real bugs live

            // Verify the order was persisted correctly
            Order saved = orderRepository.findByCustomerId("customer-1").orElseThrow();
            assertThat(saved.getStatus()).isEqualTo(OrderStatus.CONFIRMED);
            assertThat(saved.getTotalCents()).isEqualTo(1998);
        }

        @Test
        void submitOrder_handlesPaymentTimeout_gracefully() {
            // This is the test that prevents the 3am incident
            paymentGateway.stubFor(post("/charge")
                .willReturn(ok().withFixedDelay(6000))); // 6-second delay

            var response = restClient.post("/api/orders")
                .body(validOrderRequest())
                .exchange();

            assertThat(response.getStatusCode()).isEqualTo(HttpStatus.SERVICE_UNAVAILABLE);

            // Verify the order is in PAYMENT_PENDING state, not CONFIRMED
            Order saved = orderRepository.findByCustomerId("customer-1").orElseThrow();
            assertThat(saved.getStatus()).isEqualTo(OrderStatus.PAYMENT_PENDING);
        }
    }

These integration tests target the two categories behind 65% of the incident history: payment integration (40%) and data integrity (25%). The unit tests on pricing logic target another 20%. Together, that's 85% of past production bugs that the suite is aimed at.

🚫 The Anti-Patterns (Things That Actually Hurt)

Regardless of what shape your test suite takes, these patterns will sabotage you:

🧟 Zombie Tests

Tests that pass but verify nothing useful. Usually created when someone mocked every dependency and then asserted that the mocks were called. These provide coverage numbers without confidence.

The fix: If you can change the implementation without any test failing, the tests aren't testing behavior.
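
You can run that check by hand with a tiny mutation probe: swap in a deliberately broken implementation and see whether the test notices. The sketch below uses made-up names and a made-up pricing function; tools such as PIT automate the same idea for Java codebases.

```java
import java.util.function.IntBinaryOperator;

class MutationProbe {
    /** A zombie check: runs the code but accepts any result. */
    static boolean zombieTest(IntBinaryOperator totalCents) {
        totalCents.applyAsInt(999, 2);
        return true;
    }

    /** A behavioral check: pins down the expected outcome. */
    static boolean behaviorTest(IntBinaryOperator totalCents) {
        return totalCents.applyAsInt(999, 2) == 1998;
    }

    public static void main(String[] args) {
        IntBinaryOperator correct = (priceCents, qty) -> priceCents * qty;
        IntBinaryOperator mutant = (priceCents, qty) -> priceCents + qty; // deliberately wrong

        System.out.println(zombieTest(correct));   // true
        System.out.println(zombieTest(mutant));    // true  (the mutant survives)
        System.out.println(behaviorTest(correct)); // true
        System.out.println(behaviorTest(mutant));  // false (the mutant is caught)
    }
}
```

A test that still passes against the mutant is adding to the coverage number and nothing else.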

🎭 Test Theatre

A massive test suite that takes 45 minutes to run, so nobody runs it locally, so everybody ignores it when it fails in CI, so it becomes permanently red, so it provides zero value. But the dashboard exists, so management thinks quality is under control. Everyone is happy. Nothing is tested.

The fix: If your CI is red for more than 24 hours and nobody cares, your tests aren't a safety net — they're furniture. Expensive furniture that slows down your deployments.

🪞 Mirror Tests

Unit tests that are exact copies of the implementation, just with different variable names. They break every time you refactor, even when the behavior hasn't changed.

    // The implementation:
    public int add(int a, int b) { return a + b; }

    // The mirror test (worthless):
    @Test
    void add_returnsSumOfTwoNumbers() {
        int a = 2;
        int b = 3;
        assertEquals(a + b, calculator.add(a, b)); // You just rewrote the method
    }

The fix: Test behavior and outcomes, not implementation details. "When I add items to a cart, the total is correct", not "the add method calls the sum function."

🏔️ The Mountain of Mocks

Tests where 90% of the code is setting up mocks and 10% is the actual assertion. If your test setup is longer than the code being tested, you're probably testing at the wrong level.

The fix: If you need 15 mocks to test a method, either the method does too much (refactor it) or you should test it as an integration (test the real behavior).

✅ The Decision Framework

When you're staring at a feature and wondering what kind of test to write, ask yourself these questions in order:

1. Is there complex logic that can be tested with pure inputs and outputs? → Yes → Write a unit test. This is what unit tests are for.

2. Does the behavior involve communication between components? → Yes → Write an integration test. Test the communication, not the components.

3. Is this a critical user journey that spans the entire system? → Yes → Write an E2E test. But only for the critical paths.

4. Does this involve an API contract that another team depends on? → Yes → Write a contract test. Prevent breaking your consumers.

5. Is this visual behavior that can only be verified by looking at it? → Yes → Write a visual regression test. Screenshots don't lie.

6. Is this a configuration or infrastructure concern? → Yes → Write a smoke test. Verify the environment, not the logic.

If you apply these questions honestly, you'll end up with a test distribution that fits your architecture. It might be a pyramid. It might be a diamond. It might be some shape that doesn't have a name. That's fine.
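
For what it's worth, the six questions can be written down as a lookup. This is only a sketch of the checklist above: the `Trait` names are invented labels for the questions, and a feature that matches several of them gets several kinds of test.

```java
import java.util.ArrayList;
import java.util.EnumSet;
import java.util.List;
import java.util.Set;

class TestTypeChooser {
    /** One label per question in the checklist (names are illustrative). */
    enum Trait {
        PURE_LOGIC, CROSS_COMPONENT, CRITICAL_JOURNEY,
        SHARED_API_CONTRACT, VISUAL, CONFIG_OR_INFRA
    }

    /** Returns the kinds of test suggested, in the same order as the questions. */
    static List<String> recommend(Set<Trait> traits) {
        List<String> kinds = new ArrayList<>();
        if (traits.contains(Trait.PURE_LOGIC)) kinds.add("unit");
        if (traits.contains(Trait.CROSS_COMPONENT)) kinds.add("integration");
        if (traits.contains(Trait.CRITICAL_JOURNEY)) kinds.add("E2E");
        if (traits.contains(Trait.SHARED_API_CONTRACT)) kinds.add("contract");
        if (traits.contains(Trait.VISUAL)) kinds.add("visual regression");
        if (traits.contains(Trait.CONFIG_OR_INFRA)) kinds.add("smoke");
        return kinds;
    }

    public static void main(String[] args) {
        // Hypothetical feature: discount rules that also call the payment service.
        System.out.println(recommend(EnumSet.of(Trait.PURE_LOGIC, Trait.CROSS_COMPONENT)));
        // [unit, integration]
    }
}
```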

🎯 What Actually Matters

The Testing Pyramid isn't wrong. It's incomplete.

It was a useful heuristic for monolithic applications in 2009. It's still useful for certain architectures today. But treating it as a universal law leads to test suites that are either:

- ❌ Full of unit tests that don't catch real bugs, or

- ❌ Completely ignored because someone felt guilty about not having "enough" unit tests

Here's what you should focus on instead:

Test where your bugs actually live. Look at your incident history. That's your test distribution roadmap.

Optimize for confidence, not coverage. 70% coverage with the right tests beats 95% coverage with the wrong ones.

Match your tests to your architecture. Microservices need integration tests. Rich domains need unit tests. UIs need visual tests. It's not one-size-fits-all.

Organize for feedback speed. Fast tests run on every commit. Slow tests run before deployment. Nobody waits 30 minutes to find out they made a typo.

Name your shape honestly. If your test suite is a diamond, own it. If it's an ice cream cone, at least know why it's an ice cream cone and whether that's actually a problem.

The best test suite isn't the one that matches a geometric shape. It's the one that lets your team deploy on Friday afternoon without checking Slack at midnight.

And if your tests let you do that, the pyramid can stay in the textbooks where it belongs. Right next to "Waterfall is the standard methodology" and "Microservices simplify everything."

Now go look at your CI pipeline. What shape is your test suite? More importantly — is it the right shape for what you're building? If not, you've got work to do. And this time, start by looking at your incident history, not a geometry lesson.